Perfection is seductive, and brittle. Popper reminds us progress comes from refutation, not applause; physics reminds us closed systems drift toward disorder. The scientific method operationalizes both: expose assumptions, hunt errors, revise.

Stability requires feedback and self‑correction. Any viable system (a team, a product, a career) must detect errors, learn, and adjust faster than conditions change. Closed systems chase certainty, suppress dissent, and decay. Adaptive systems expose assumptions, run tests, and revise continuously, trading the comfort of perfection for durability.

Name the trap: certainty theater

You can feel it in the room: plans presented as finished, dissent framed as delay, bad news saved for later. It looks confident. It isn’t stable.

Closed systems are alluring because they reduce anxiety. But the cost is compounding blindness. When teams optimize for being right, they punish the very signals that would keep them correctable. Over time, such a team either drifts into quietly missing the market or snaps into top‑down control to preserve the illusion of certainty.

A quick diagnostic: if the last three misses were explained away rather than examined, you’re running a closed loop. The fix isn’t “more data.” It’s a different posture: prefer being corrigible to being convincing.

Example: A quarterly plan celebrates on‑time delivery while churn rises. A corrective stance would ask, “What would have to be true for this roadmap to be wrong?” and then instrument those risks upfront.

Use Popper’s test on plans

Short scene: a roadmap review where every slide supports the decision. It feels tight. It’s unfalsifiable.

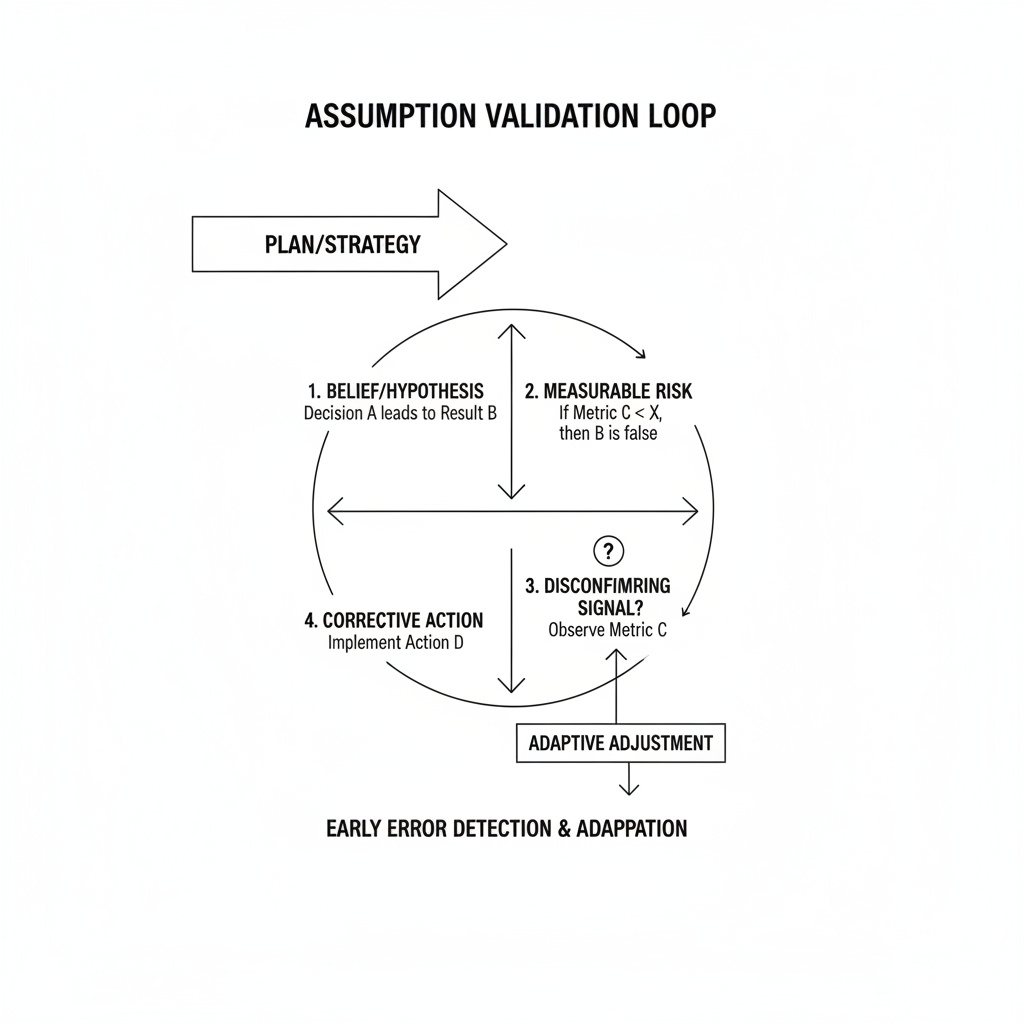

Popper’s point was simple: useful ideas can, in principle, be proven wrong. Treat your strategy the same way. For every commitment, name a disconfirming signal and a pre‑agreed change you’ll make if that signal shows up.

“Convert belief to a bet: ‘We believe onboarding friction is the main driver of churn.’ State a measurable risk: ‘If first‑session completion doesn’t improve within 30 days of change X, our belief is likely wrong.’ Pre‑commit a correction: ‘If wrong, we pause new features and run 5 loss interviews within 72 hours.’”
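If it helps to see the shape of a bet written down, here is a minimal Python sketch. The `Bet` class, its field names, and the 0.42 baseline are all illustrative assumptions, not part of any real tool; the only things taken from the example above are the belief, the 30‑day window, and the pre‑committed correction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bet:
    """Hypothetical record of one falsifiable commitment."""
    belief: str        # the claim we are acting on
    metric: str        # the disconfirming signal we agreed to watch
    baseline: float    # metric value before the change (made-up number below)
    window_days: int   # how long the change gets to show an effect
    correction: str    # the pre-agreed move if the belief fails

    def status(self, observed: float, days_elapsed: int) -> str:
        """Classify the bet as 'holding', 'open', or 'falsified'."""
        if observed > self.baseline:
            return "holding"    # the metric improved, so the belief survives for now
        if days_elapsed < self.window_days:
            return "open"       # no improvement yet, but the window has not closed
        return "falsified"      # window closed without improvement: run the correction


bet = Bet(
    belief="Onboarding friction is the main driver of churn",
    metric="first-session completion rate",
    baseline=0.42,  # illustrative value, not real data
    window_days=30,
    correction="Pause new features; run 5 loss interviews within 72 hours",
)

print(bet.status(observed=0.41, days_elapsed=12))  # open
print(bet.status(observed=0.41, days_elapsed=30))  # falsified
```

The point of the structure is that the correction is written before the result is known, so nobody has to negotiate it afterward.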

Mini‑case: I once inherited a program that “had to work” because an exec had sponsored it. We added one falsifier: “If NPS (Net Promoter Score) falls for new users in two consecutive weeks, we stop rollout.” It tripped in week two. We halted, shipped a small fix, and recovered within 10 days. Pride took a hit. Trust increased.
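A falsifier like the one in the mini‑case is simple enough to check mechanically. The sketch below is one possible reading of “falls in two consecutive weeks”: two week‑over‑week declines in a row, which needs a baseline plus two readings. The function name and the sample scores are hypothetical.

```python
from typing import Sequence


def falsifier_tripped(weekly_nps: Sequence[float], consecutive_drops: int = 2) -> bool:
    """Return True if the most recent `consecutive_drops` week-over-week changes were all declines."""
    if len(weekly_nps) < consecutive_drops + 1:
        return False  # not enough history to judge yet
    recent = weekly_nps[-(consecutive_drops + 1):]
    # Compare each week with the one before it; every step must be a drop.
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


# Scores are oldest first: baseline, week one, week two (made-up numbers).
print(falsifier_tripped([42, 39, 35]))  # True: two drops in a row, stop rollout
print(falsifier_tripped([42, 39, 40]))  # False: week two recovered
```

Agreeing on the exact rule in advance matters more than the code: it removes the temptation to reinterpret “falls” once the numbers arrive.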

Build feedback loops that bite

Start small, shorten cycles, and make correction cheap. A loop “bites” when it turns a vague concern into a routine adjustment, fast.

Make retros time‑boxed and rhythmic: a 30‑minute weekly review that inspects one decision, one outcome, one change. Keep blame out; keep decision patterns in. Instrument a disconfirming signal per bet: one metric, one behavior, or one qualitative pulse. Don’t drown in dashboards. Shrink the unit of change: ship one reversible move you can observe within 24–48 hours. Speed isn’t theater; it’s a safety feature that makes learning affordable.

Example: Instead of rebuilding onboarding, add a single prompt that addresses the most common stuck point; measure first‑session completion and the top support tag for a week; decide go, revert, or iterate.

Show mature proof without hype

A credible culture doesn’t celebrate certainty; it publishes learning. This is how you avoid “trust me” leadership while keeping momentum.

Publish decision logs: one paragraph on the choice, the bet behind it, the disconfirming signal, and the next check‑in. Keep it visible. Run blameless post‑incident reviews within 48 hours: focus on triggers, detection lag, and how the work made the error possible. Reward the first detector. Share loss learnings: five short notes on why customers left, what you’d try next, and what you’ll stop doing. No villains; just mechanisms.

Example: An engineering team noticed most incidents were self‑inflicted within an hour of deploy. They added a 60‑minute “watch window” with on‑call pairing after each release. Incidents dropped and, more importantly, detection time collapsed. Stability improved by improving correction speed.
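To test a hunch like that team’s before changing the process, you could measure how many incidents start shortly after a deploy. This is a rough sketch under stated assumptions: you have timestamps for deploys and for incident starts, and `share_within_window` is a hypothetical helper, not part of any monitoring product. It measures timing only; it cannot tell you a deploy caused the incident.

```python
from bisect import bisect_right
from datetime import datetime, timedelta
from typing import Sequence


def share_within_window(
    deploys: Sequence[datetime],
    incidents: Sequence[datetime],
    window: timedelta = timedelta(minutes=60),
) -> float:
    """Fraction of incidents that began within `window` after the most recent deploy."""
    if not incidents:
        return 0.0
    ordered = sorted(deploys)
    hits = 0
    for started in incidents:
        i = bisect_right(ordered, started)  # count of deploys at or before the incident
        if i and started - ordered[i - 1] <= window:
            hits += 1
    return hits / len(incidents)


# Illustrative timestamps only.
deploys = [datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 9, 15, 0)]
incidents = [
    datetime(2024, 1, 8, 10, 20),  # 20 minutes after a deploy
    datetime(2024, 1, 8, 13, 0),   # 3 hours after a deploy
    datetime(2024, 1, 9, 9, 0),    # 23 hours after a deploy
    datetime(2024, 1, 9, 15, 45),  # 45 minutes after a deploy
]
print(share_within_window(deploys, incidents))  # 0.5
```

If the share is high, a watch window is a cheap bet; if it is low, the pairing time is probably better spent elsewhere.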

What smart peers need to trust you

Peers don’t need you to be right. They need to see how you get back to right. Make the mechanism legible.

State the category, the working mechanism, and the intended outcome in one clear line. Then show the smallest run where it held up. Expose your expertise map: the few decisions you make repeatedly under constraints. That’s what colleagues bank on when the plan meets reality. Offer one visible correction story: “We thought X, we watched Y, we changed Z, and here’s what moved.” Short, concrete, no self‑congratulation.

Micro‑example: “Hiring: We thought speed beat fit. After two mis‑hires, we added a 20‑minute work sample and a post‑interview calibration within 24 hours. Time‑to‑offer rose by two days; ramp time fell by three weeks.” That’s clarity over charisma, and the kind of authority artifact that travels.

Hold the core, change the rest

Here’s the fear: if we open the door to constant feedback, we drift. That happens when you treat everything as provisional. Don’t. Protect a stable core (mission, values, quality bar) and make everything else testable.

Three guardrails keep openness from becoming chaos. First, name the non‑negotiables: customer promise, safety standards, and the few rules that maintain trust. Don’t A/B test integrity. Second, set decision horizons: daily for tactics, monthly for strategy, annual for mission. You can review faster in a crisis, but announce when you’re centralizing and for how long. Third, cap concurrent bets: two or three falsifiable hypotheses per team at any time. Focus is also a feedback mechanism; it raises the signal‑to‑noise ratio.

“Stability is not the absence of change; it’s the presence of correction. Power is not control over information; it’s fluency with being wrong in public. Confidence is not loud conviction; it’s the quiet habit of returning to reality faster than others.”

Example: During a market wobble, one team centralized decisions for two weeks to protect cash and paused new bets. They kept daily check‑ins to re‑open quickly. Temporary closure served stability because re‑opening was explicit.

Closed systems decay or harden into authoritarian habits. Adaptive systems survive because they make error detection more valuable than being right the first time. If you organize your work so that dissent is data, correction is routine, and revision is inexpensive, stability stops being a mood and becomes a practice.