Intelligence isn’t brightness; it’s upkeep. Your brain isn’t a library that stores facts; it’s a workshop where you build, test, and rebuild explanations that predict reality.

Stop hoarding facts

I used to pride myself on answering fast. It felt like competence. Then a simple pricing change knocked my “expert take” apart in a week.

Knowing more can help, but knowledge is fragile if it isn’t organized into a testable explanation. Facts are snapshots; intelligence is the camera: how you frame, aim, and refocus the lens. The trap is mistaking recall for reasoning. You see it when a “best practice” survives long after the conditions that justified it have changed.

Popper argued that an idea earns its strength by surviving attempts to prove it wrong. Treat your view as a draft, not doctrine.

Consider this micro-example: Your team blames churn on onboarding length. You cut steps and nothing changes. A first-principles pass exposes the real driver: misfit pricing tiers. The fix isn’t faster tutorials; it’s a clearer value ladder.

Practice first principles thinking

In physics class, the hardest questions weren’t recall; they were derivation. Feynman insisted that you understand from the ground up: start with what must be true, then build. This approach narrows the search space by anchoring on truths rather than habits. You see more options, and you stop copying other people’s outcomes and start rebuilding mechanisms for your own constraints.

Here’s a simple protocol to ground your thinking:

- Write the irreducibles: What’s undeniably true about the problem? Keep it to 3–5 statements.

- Map causes to effects: Draw a line from principle to prediction.

- Price your uncertainty: Where are you guessing? Mark those as bets.

Take this example: You’re asked to “grow enterprise leads.” Your irreducibles might be: (1) your ideal customer profile (ICP) buys on risk reduction; (2) trust precedes outreach; (3) sales cycles are long. Your prediction: better case studies beat more ads. Your bet: one flagship proof beats five minor anecdotes. Now you can test.

Revise fast in public

Even the cleanest build meets noise. That’s the point. Popper’s bar is simple: try to break your own idea before the world does.

I once argued a quarterly plan hinged on “sales enablement” gaps. Two weeks of shadowing calls showed the blocker wasn’t decks; it was authority: our reps couldn’t cite a single proof point that mapped to CFO risk. We rewrote two case studies, dropped three features from the pitch, and win rates moved within a month. The model didn’t fail; my assumption did.

When reality pushes back, compare prediction with outcome on one page and highlight the miss, not the excuse. Locate the wrong assumption rather than rebuilding the whole structure: replace one beam; don’t tear down the house. Then publish the revision note; a short update builds credibility by showing how you learn.

Intelligence is the ongoing loop of reducing problems to first principles and revising your mental models through error correction.

Consider this revision in action: You predicted “self-serve conversions will lift with a shorter trial.” Conversions fell. The post-mortem shows a fear spike at purchase. Your revision: extend the trial but add usage gating to surface value early.

Make your value visible

You do this loop already. The problem is your value stays implicit. A smart peer can’t see how you think; they only see artifacts after the fact.

Turn implicit reasoning into public signal. Write decision memos that start with first principles, then list bets; keep each to 500–800 words. Share “assumptions to outcomes” threads as an authority surface: one assumption, how you tested it, what changed. Maintain an expertise map covering the 5–7 problems you solve repeatedly, with the decision patterns you reuse.

This isn’t about performance; it’s clarity over charisma. When your narrative shows how you reason under constraints, your claims become defensible.

Run one weekly test

Big transformations come from small, repeated updates. Think of it as a two-step loop you run every week that changes your decision patterns without stalling execution.

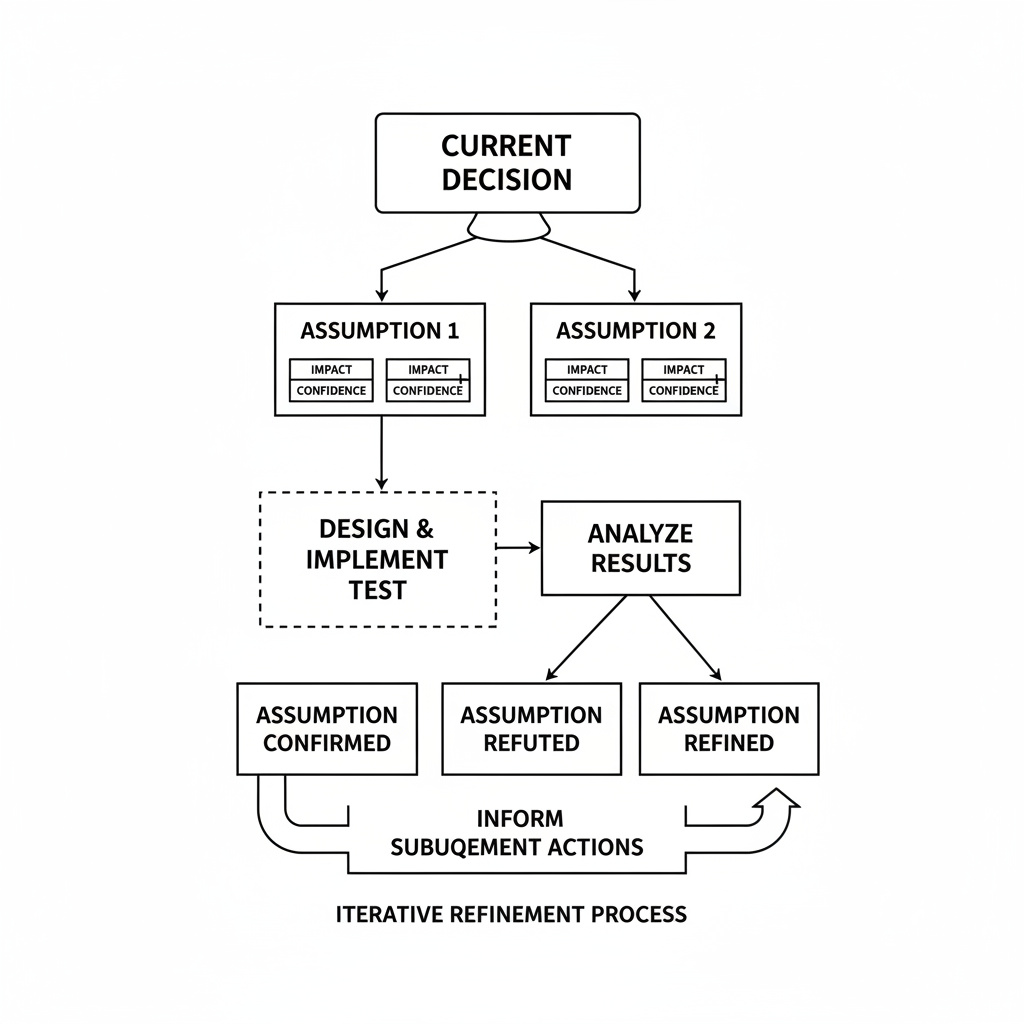

On Monday, spend one hour naming two assumptions behind a current decision and label each as “high impact / low confidence” or not. Design a thin test for exactly one assumption and ship it by Wednesday. On Friday, write a 150-word update asking: Do results confirm, refute, or narrow the range? What changes next week?

Three numeric anchors keep you honest: one hour to set up, one test per week, and a 90-day window to see the pattern shift. Here’s how it works: You assume “discounts close deals at quarter-end.” An A/B test with and without discounts shows the same close rate but higher refund risk. Your revision: kill blanket discounts and add a value-based add-on instead.
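If you want to check whether “same close rate but higher refund risk” is more than noise, a rough sketch might look like the one below. It uses a two-proportion z-test with a normal approximation, which is my choice for illustration and not something the example above prescribes. Every count is hypothetical, and the function names `two_proportion_z` and `verdict` are made up for this sketch.

```python
from math import erf, sqrt


def two_proportion_z(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the gap between two observed rates.

    Uses the pooled-variance normal approximation, which is only
    reasonable when both groups have a decent number of events.
    """
    rate_a = success_a / n_a
    rate_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Standard normal CDF via erf, then a two-sided tail probability.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


def verdict(p_value, alpha=0.05):
    """Translate a p-value into a plain-language call (alpha is an assumption)."""
    return "rates differ" if p_value < alpha else "no clear difference"


# Hypothetical counts, invented purely for illustration.
# Deals closed out of deals offered: discount arm vs. no-discount arm.
close_z, close_p = two_proportion_z(84, 400, 80, 400)
# Refunds out of closed deals in each arm.
refund_z, refund_p = two_proportion_z(18, 84, 4, 80)

print("close rate:", verdict(close_p))
print("refund rate:", verdict(refund_p))
```

With these made-up numbers the close rates are statistically indistinguishable while the refund rates are not, which is the pattern that would support dropping blanket discounts. With small weekly samples, treat the result as a signal to narrow the range, not as proof.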

Check for defensibility

Before you publish a decision or ship a plan, run a short gate. It’s a calm pause, not a brake. Ask yourself: Is there a falsifiable claim? If not, add one. Did you state at least one constraint? If not, you might be hand-waving. What would change your mind? Name the disconfirming signal now.

This quality test keeps your story sturdy under pressure and prevents analysis paralysis. It turns humility into a tool.
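If it helps to make the gate mechanical, the three questions can be sketched as a tiny checklist. This is only an illustration: the `DecisionMemo` fields and the `gate` function are hypothetical names, and the code checks only that each item has been written down, not that the claim is truly falsifiable. That judgment stays with you.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DecisionMemo:
    """Hypothetical container for the three things the gate asks about."""
    falsifiable_claim: str = ""
    constraints: List[str] = field(default_factory=list)
    disconfirming_signal: str = ""


def gate(memo: DecisionMemo) -> List[str]:
    """Return the gaps to close before publishing; an empty list means pass."""
    gaps = []
    if not memo.falsifiable_claim.strip():
        gaps.append("add a falsifiable claim")
    if not memo.constraints:
        gaps.append("state at least one constraint")
    if not memo.disconfirming_signal.strip():
        gaps.append("name what would change your mind")
    return gaps


# Example draft built from the discount scenario; no constraint stated yet.
draft = DecisionMemo(
    falsifiable_claim="Removing blanket discounts will not lower the quarter-end close rate.",
    disconfirming_signal="Close rate drops in the no-discount group.",
)
print(gate(draft))
```

Here the draft fails on one item, the missing constraint, so the pause costs a sentence rather than a rewrite.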

Intelligence isn’t brightness; it’s upkeep. First principles give you bedrock. Revision gives you drainage. Together, they produce judgment that survives weather. Facts still matter, but they’re lumber. The craft is in what you build, how you test it, and how quickly you’re willing to rebuild when the grain runs the other way.