The fortress mentality around AI crawlers might be costing you more authority than it protects. Here’s how strategic visibility beats blanket blocking.

I used to block every AI bot I could identify. My robots.txt file looked like a fortress wall: GPTBot, Google-Extended, ChatGPT-User, all denied access. The logic felt bulletproof: protect my intellectual property, prevent my ideas from being synthesized without attribution, and maintain control over my content.

Then I noticed something unsettling. My expertise was disappearing.

The Cost of Invisibility

Last spring, I searched for insights on a topic I’d written extensively about. ChatGPT’s response cited three other experts, none of them me. Google’s AI Overview referenced four sources. Again, I was absent. My content existed, but to AI models, I might as well have been invisible.

The realization hit hard: while I was protecting my content from AI, my competitors were building authority through AI citations. Every blocked crawler was a missed opportunity for recognition, referral traffic, and credibility in the new search landscape. The cost wasn’t just traffic; it was relevance. When potential clients asked AI tools for expert recommendations in my field, my name never appeared. I’d optimized for protection and accidentally optimized myself out of discovery.

“Blocking all AI crawlers makes you invisible in generative search, costing up to 23% of potential traffic and authority.”

Strategic Visibility Over Blanket Protection

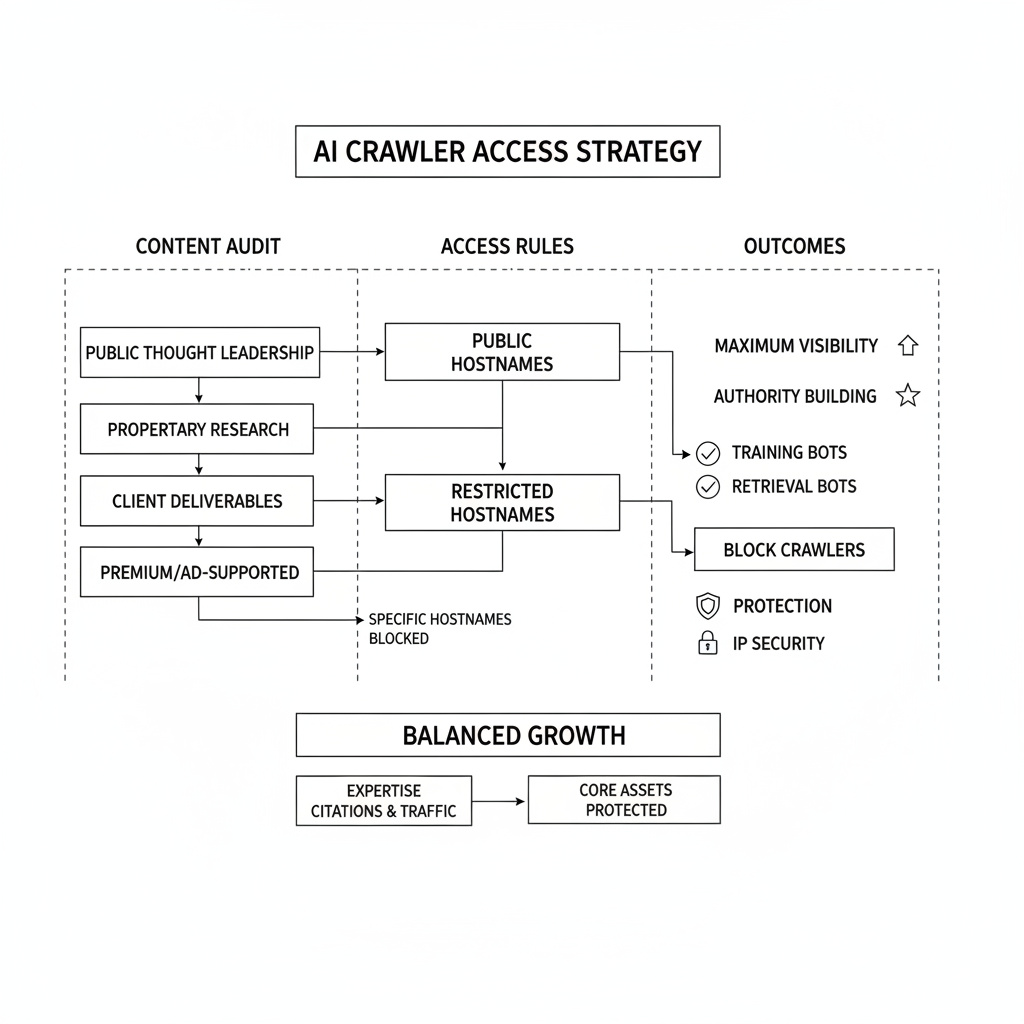

The shift required rethinking what I was actually protecting versus what I was sacrificing. Most of my public content (blog posts, insights, perspectives) gained value from being discovered and cited. The proprietary assets worth protecting were specific: detailed research data, client work, and premium content behind paywalls.

I started allowing crawlers selectively. My most insightful public content became visible to AI models, while I maintained granular control over sensitive materials using targeted robots.txt directives and strategic hostname blocking. The change felt counterintuitive at first. Years of “protect everything” thinking don’t reverse overnight. But I realized that being cited as a source by AI models provides something traditional SEO never could: immediate credibility in conversational search results.

Understanding Bot Behavior

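The breakthrough came from recognizing that not all AI bots operate the same way. Training bots like GPTBot crawl content to build future language models; they’re learning from your content to improve their general capabilities. Retrieval bots like ChatGPT-User fetch content in real time to answer specific user questions right now. Google-Extended, which I had lumped in with the rest, is not a separate crawler at all: it is a robots.txt token that controls whether content Google has already crawled may be used for Gemini, so it belongs closer to the training side.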

Blocking retrieval bots specifically prevents your site from appearing in current AI-generated citations and responses. These are the bots that can drive qualified referral traffic back to your site when users want to read your full perspective. I kept training bot restrictions for my most sensitive content while allowing retrieval access to my public insights. This balance lets me build authority in AI-powered search while maintaining control over how my content trains future models.
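One way to express that balance is to generate robots.txt from a small policy table. The sketch below is illustrative only: the grouping of bots into training and retrieval roles follows how their vendors describe them and should be checked against current documentation, and the paths `/research-data/` and `/clients/` are hypothetical stand-ins for wherever sensitive material lives.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: bot token -> path prefixes it may NOT crawl.
# Training-side tokens are kept out of raw research and client work;
# retrieval bots may read research pages but never client work.
POLICY = {
    "GPTBot": ["/research-data/", "/clients/"],           # training crawler
    "Google-Extended": ["/research-data/", "/clients/"],  # training control token
    "ChatGPT-User": ["/clients/"],                        # user-triggered retrieval
    "OAI-SearchBot": ["/clients/"],                       # search retrieval
}


def build_robots_txt(policy: dict) -> str:
    """Render one robots.txt group per bot, separated by blank lines."""
    groups = []
    for bot, blocked in policy.items():
        rules = "\n".join(f"Disallow: {path}" for path in blocked)
        groups.append(f"User-agent: {bot}\n{rules}\n")
    return "\n".join(groups)


def is_allowed(robots_txt: str, bot: str, path: str) -> bool:
    """Check a bot/path pair against robots.txt text using the stdlib parser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, path)


if __name__ == "__main__":
    print(build_robots_txt(POLICY))
```

Keep in mind that robots.txt is a request, not an access control: well-behaved crawlers honor it, but anything truly confidential still belongs behind authentication.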

Tactical Implementation

The technical execution proved simpler than expected. Instead of blanket bot blocking, I implemented granular controls. Public thought leadership content allows most crawlers. Proprietary research and client deliverables remain protected through specific robots.txt rules and strategic hosting decisions.

For content with ads or premium access, I block crawlers on those specific hostnames while allowing access to my main editorial content. AI models excel at synthesizing information but struggle with original research, so protecting raw data while allowing summaries to be crawled strikes the right balance.

One consulting client saw a 40% increase in qualified inbound inquiries within three months of implementing strategic crawler access. Their expertise began appearing in AI-generated responses to industry questions, positioning them as a go-to authority.
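Hostname-level blocking works because crawlers request robots.txt separately from each host, so each host can answer with its own rules. Here is a minimal sketch of that idea as a WSGI app, assuming hypothetical hostnames (`premium.example.com`, `ads.example.com`) and an illustrative bot list; it is not a description of any particular hosting setup.

```python
# Illustrative list of AI bot tokens; adjust to the bots you care about.
AI_BOTS = ["GPTBot", "Google-Extended", "ChatGPT-User", "OAI-SearchBot"]

# Hypothetical hostnames that carry ads or premium content.
PROTECTED_HOSTS = {"premium.example.com", "ads.example.com"}


def robots_txt_for_host(host: str) -> str:
    """Return the robots.txt body that a given Host header should receive."""
    hostname = host.split(":")[0].lower()  # drop any :port suffix
    if hostname in PROTECTED_HOSTS:
        # One group per AI bot, each denied the whole host.
        return "\n".join(f"User-agent: {bot}\nDisallow: /\n" for bot in AI_BOTS)
    # Main editorial host: everything is crawlable.
    return "User-agent: *\nAllow: /\n"


def app(environ, start_response):
    """Tiny WSGI app that serves a host-specific robots.txt and nothing else."""
    if environ.get("PATH_INFO") != "/robots.txt":
        start_response("404 Not Found", [("Content-Type", "text/plain")])
        return [b"not found"]
    body = robots_txt_for_host(environ.get("HTTP_HOST", "")).encode("utf-8")
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [body]
```

In practice the same effect can come from placing a different static robots.txt at the root of each host; the function above only makes the per-host decision explicit.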

The Authority Dividend

Today, my content appears regularly in AI-generated responses. When someone asks ChatGPT about strategic clarity or timing models, I’m often cited as a source. That citation drives curious readers to my full articles, creating a referral loop that traditional SEO couldn’t match.

The shift from protection-first to strategic visibility changed how I think about content distribution entirely. Instead of hoarding insights, I publish my best thinking publicly while protecting only what truly needs protection. The result: increased authority, better discovery, and more qualified opportunities.

“The professionals who will dominate the next decade of search won’t be those who hid from AI, they’ll be those who learned to work with it strategically.”

Your Visibility Decision

If you’re building thought leadership in any field, consider what you’re actually protecting versus what you’re sacrificing. The question isn’t whether to engage with AI crawlers; it’s how to engage strategically. Start by auditing your current bot blocking. Are you preventing discovery of insights that could build your authority? Could you allow retrieval access to your best public content while maintaining protection over truly sensitive assets?
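A first-pass audit can be as simple as running your existing robots.txt through Python's standard `urllib.robotparser` and listing which paths each AI bot is denied. The sketch below assumes an illustrative bot list and hypothetical sample paths; swap in your own file and URLs.

```python
from urllib.robotparser import RobotFileParser

# Illustrative list of AI bot tokens to audit.
AI_BOTS = ["GPTBot", "Google-Extended", "ChatGPT-User", "OAI-SearchBot"]


def audit_robots(robots_txt: str, paths: list[str]) -> dict[str, list[str]]:
    """Map each AI bot to the subset of `paths` that robots.txt denies it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        bot: [path for path in paths if not parser.can_fetch(bot, path)]
        for bot in AI_BOTS
    }


if __name__ == "__main__":
    # A "fortress" file like the one described at the top of this article.
    fortress = "\n".join([
        "User-agent: GPTBot",
        "Disallow: /",
        "",
        "User-agent: Google-Extended",
        "Disallow: /",
        "",
        "User-agent: ChatGPT-User",
        "Disallow: /",
    ])
    # Hypothetical paths: one public article, one client deliverable.
    sample_paths = ["/blog/strategic-clarity", "/clients/report"]
    for bot, blocked in audit_robots(fortress, sample_paths).items():
        print(f"{bot}: blocked from {blocked or 'nothing'}")
```

To audit a live site instead of a string, `RobotFileParser` can load the file itself via `set_url(...)` followed by `read()`.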

The choice between fortress walls and strategic bridges will define who gets discovered in the age of AI-powered search.